Welcome back, readers!

I've finally finished reading Superforecasting: The Art and Science of Prediction, by Philip E. Tetlock and Dan Gardner. Two weeks ago I wrote about what I'd read up to that point. This week I'll be covering additional topics from the book.

Words Have Meaning

First, a little history lesson. Back in 1941 a man by the name of Sherman Kent left a job at Yale University to join the Research and Analysis Branch of the US government's recently created Office of the Coordinator of Information (COI). The COI became the Office of Strategic Services (OSS), which in turn was the forerunner of the Central Intelligence Agency (CIA).

Needless to say, Mr. Kent left a huge legacy on how the US government's intelligence community works. In 1951 Mr. Kent and his team briefed senior officials about a potential Soviet attack on Yugoslavia, calling the potential for war a "serious possibility."

A few days after the briefing one of the other officials from the meeting ran across Mr. Kent and asked exactly what they meant by "serious possibility." His response was that they put the odds at something like 65 to 35 in favor of an attack. The official reeled back, surprised, as he and his colleagues had assumed that the odds were considerably lower.

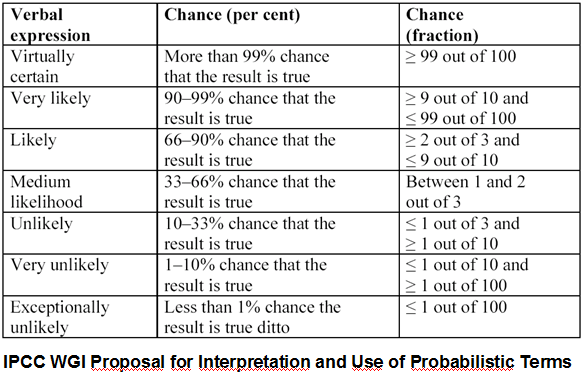

This led Mr. Kent to try to develop a standard for what words regarding probability should mean.

While it may not seem like a big deal, one of the biggest flaws in many types of forecasting is that many forecasters prefer vague terms to protect their egos from being wrong. If someone says there's a good chance that card A will go up in value at rotation without defining "good chance," then they can sit back and claim there was also a good chance it wouldn't go up.

One of the big points in this book is to view forecasting from a more scientific standpoint, which requires specificity. This is why weather forecasters almost always include the actual probability an event will occur. They say there's a 40% chance of rain or there's a 90% chance of fog. Whether they are right can only be judged by tracking their forecasts over the long run. A single rainy or dry day proves nothing (every time there's a 40% chance of rain there's a 60% chance it won't rain), but across all the days with a 40% forecast it should rain about 40% of the time.
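To make the idea of tracking forecasts concrete, here is a minimal sketch in Python. The record of forecasts is made up purely for illustration, and the function names are my own. The scoring function is the common single-outcome form of the Brier score (0 is perfect, 1 is as wrong as possible), which may be scaled differently from the version used in the book.

```python
from collections import defaultdict


def calibration_table(forecasts):
    """Group (stated_probability, happened) pairs by stated probability.

    Returns {probability: (observed_frequency, number_of_forecasts)}.
    """
    buckets = defaultdict(list)
    for prob, happened in forecasts:
        buckets[prob].append(1 if happened else 0)
    return {p: (sum(v) / len(v), len(v)) for p, v in sorted(buckets.items())}


def brier_score(forecasts):
    """Mean squared gap between the stated probability and the outcome."""
    total = sum((p - (1 if h else 0)) ** 2 for p, h in forecasts)
    return total / len(forecasts)


# Hypothetical record: ten days forecast at 40% rain, five at 90% fog.
record = (
    [(0.4, True)] * 4 + [(0.4, False)] * 6
    + [(0.9, True)] * 4 + [(0.9, False)] * 1
)

table = calibration_table(record)
score = brier_score(record)

for prob, (observed, count) in table.items():
    print(f"said {prob:.0%}, happened {observed:.0%} of the time ({count} forecasts)")
print(f"Brier score: {score:.3f}")
```

In this invented record the 40% calls are perfectly calibrated, while the 90% calls came true only 80% of the time. With so few forecasts that gap could easily be noise, which is exactly why the long-run record matters.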

Moving forward I will try to reference this chart whenever I make any type of financial prediction related to Magic cards. I feel it's extremely important that anyone who speculates/forecasts is specific with their intentions regarding card choices.

I accept that this won't be easy (I know I'll be picking my words much more carefully now) and my ego may end up a bit bruised after some wrong calls. But comfort zones inhibit maximum growth, much like a small plastic box does for a plant.

Fermi Problem

In my first article I discussed the idea of breaking down a question into sub-questions. This technique was popularized by renowned Italian physicist Enrico Fermi who often posed the following question to his students: "How many piano tuners are there in Chicago?"

Now, when asked point-blank, we might stare back quizzically at the asker or, thanks to smartphones, pull out a phone and Google it. Unfortunately for you, Google doesn't just spit out an answer. The idea behind the question is to break it down using estimates. In this case:

- There are 9,000,000 people living in the Chicago area.

- There are on average 2 people per household.

- Around 1 in 20 households has a piano that they tune regularly.

- Pianos are typically tuned once per year.

- It takes about 2 hours to tune a piano (when you factor in travel time).

- A typical piano tuner likely works 40 hours per week, for 50 weeks a year.

9,000,000 people / 2 people per household = 4,500,000 households

4,500,000 households * 1/20 households with a piano = 225,000 pianos

225,000 pianos * 1 tuning per year = 225,000 tuned pianos

225,000 tuned pianos * 2 hours per tuning = 450,000 hours spent tuning pianos

450,000 hours spent tuning pianos / (40 hrs per week * 50 weeks per year spent tuning) = 225 piano tuners

So the final estimate is that there are 225 piano tuners in Chicago.

Apparently someone went through the phone book in the Chicago area and found there were actually 290 piano tuners. Given that all the calculations were done using estimates, being only about 22% off (65 tuners short of 290) is really impressive. The key to these types of problems is that while the question itself may seem almost (or completely) unanswerable at first, breaking it down into all the potential factors can allow for some relatively accurate estimates.

It only took about five minutes to gather all that information and we were within 25% of the actual answer. To find the "correct" answer without estimation would likely take hours of combing through the Chicago yellow pages (and figuring out what types of businesses offer piano tuning on top of other services). In light of all this, the trade-off seems pretty worthwhile.
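The breakdown above is easy to turn into a few lines of Python, which makes it simple to swap in different guesses and see how much the answer moves. This is just a sketch: the function and parameter names are my own, and the defaults are the estimates listed in this article.

```python
def fermi_piano_tuners(
    population=9_000_000,
    people_per_household=2,
    households_per_piano=20,      # 1 in 20 households has a tuned piano
    tunings_per_piano_per_year=1,
    hours_per_tuning=2,           # includes travel time
    hours_per_week=40,
    weeks_per_year=50,
):
    """Estimate the number of piano tuners from a chain of rough guesses."""
    households = population / people_per_household
    pianos = households / households_per_piano
    tuning_hours = pianos * tunings_per_piano_per_year * hours_per_tuning
    hours_per_tuner = hours_per_week * weeks_per_year
    return tuning_hours / hours_per_tuner


estimate = fermi_piano_tuners()
print(estimate)  # 225.0

# Compare against the phone-book count quoted in the article.
phone_book_count = 290
shortfall = (phone_book_count - estimate) / phone_book_count
print(f"estimate is {shortfall:.0%} below the phone-book count")

# Changing a single guess shows how sensitive the answer is to it.
print(fermi_piano_tuners(people_per_household=2.5))
```

Writing the guesses as named parameters also makes it obvious which assumption to revisit first when the estimate misses.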

Traits of a "Superforecaster"

Mr. Tetlock found some common traits among his group of "superforecasters" which likely aid them in their abilities to forecast well. These are:

- Cautious - People who are quick to make decisions often don't take into account all the options.

- Humble - Whenever you're in a position where others may look up to your decisions, humility goes a long way. Ego can often cause forecasters to extreme-ize their forecasts in order to make a bigger headline when they happen to be correct.

- Nondeterministic - The belief that "what's meant to be will be" can't be scientifically proven. While it can be comforting to people, it does not aid in forecasting, where outcomes have to be treated as uncertain rather than fated.

- Actively Open-Minded - A good forecaster has an open mind about different possibilities; a "superforecaster" actively forces themselves to look at problems/questions from multiple viewpoints. This allows one to see potential pitfalls or benefits that could be missed if one only considered their own viewpoint.

- Intelligent/Knowledgeable - This isn't to say that someone who doesn't have a strong educational background can't make a good forecaster, but it takes a desire for knowledge to drive someone to keep researching/considering alternatives instead of stopping at the first plausible explanation.

- Reflective - A "superforecaster" needs to be able to accept criticism, especially from themselves. When we fail we need to determine why, and the same goes for success.

- Numerate - Numbers are important for proper forecasting; vagueness is akin to laziness when it comes to accurate forecasting. By being specific with the numbers, the success or failure is easier to measure.

- Pragmatic - It's important for any "superforecaster" to be able to change their mind/opinion when facts or events change. Staying married to a bad idea or agenda will only harm the actual forecasts and lead to inaccuracy.

- Analytical - In order to maximize the accuracy of a forecast it's important to analyze the information behind it, as not all sources or pieces of information are equal.

- Probabilistic - Understand the probability of events occurring and how to develop more accurate odds on any future forecasts. This also ties in with our first discussion about the exact meaning of words.

I really enjoyed this book and I strongly encourage any of my readers to read it themselves. There are a lot of additional concepts I haven't covered yet. While I will likely try to write one more article regarding this book and its ideas, there are still concepts in it that I don't feel I understand well enough to explain, so I won't risk misleading you all by attempting to.

Interesting read. In your weather example, I believe weather probabilities are derived from Monte Carlo simulation: starting with the weather state today (H/L pressure, clouds, wind, etc.), the simulation builds a tree of many different future scenarios by making a small random change in each step. Then, out of say 100 future states, if 40 of them result in rain they can suggest a 40% rain probability.

The question is, what sort of prediction approach applies to cards? Simulation probably doesn't work for the home trader, unless you can simulate games vs. existing archetypes, which would require farm-level compute power. But maybe a historical, data-driven approach is more appropriate? Would really like to see suggestions of some specific prediction approaches in part 3 of this series!

Thanks for the comment. I believe you are somewhat correct with regards to the weather probability calculations. However, forecasters skew the probability of bad weather towards "more likely" (i.e. if their model shows a 25% chance they may round all the way up to 40%). If you choose not to bring an umbrella because they told you there was only a 25% chance of rain and it does happen to rain, you'll be angrier at the weatherman, because that outcome (you getting drenched) is considerably worse than the opposite one (you carrying around an umbrella all day and not using it).

Nice read. I like this a lot, makes sense.

Thanks. I appreciate feedback (both positive and negative). If there's anything you'd like me to expand on, feel free to comment here or send me a PM in the forums.